Data Backup – How Not to Lose Data When Hacked (In Theory)

Maybe you’re wondering how backup is related to computer security? Backing up data (even if you do it properly) does not make your network more resilient against hackers and viruses. But once your network is under attack, the backups have to hold. I would like to share the backup design we came up with and what we applied in the end.

The questions we asked ourselves

Just like our antivirus setup, our backups were not in order either. Customers used different software, different backup destinations, and different backup times, and their backups varied in how well they resisted data loss. This was still the case in mid-2015. As part of our standardization effort (for the reasons and benefits see: “Standardization – Doing IT as Simple as That“), we finally unified our approach and created best practices for backing up.

When designing our final solution, we asked ourselves the following questions. Of course, the answers depend on the environment: the number of servers, the amount of data, where the data is stored, and the network speed. You will design a backup one way for a company that keeps large archives of relatively unchanging data in one place, and another way for a company that mostly runs application servers with databases spread across different locations. The type of customer we designed our solution for is a company with at most dozens of servers and up to dozens of terabytes of data. Such customers have both common shared folders and various information systems on their servers.

How quickly do we restore our data? (Recovery time objective) (property of customer's interest)

Our goal is to recover the data as quickly as possible after data loss or corruption. During data recovery, the customer can work only partially or not at all. This downtime costs a lot of money (how much depends on the customer's size and on which systems are down). When thinking about the speed of recovery, I picture the following two events.

Partial data loss

For example, a user deletes a file or directory by mistake. Normally this recovery is quick: the lost data is restored from the backup, and which backup technology you use hardly matters. Even so, you need to run a test recovery in your own environment to uncover circumstances you cannot anticipate (for example, a bottleneck on the network, or slow backup storage because the backups are stored in 30 increments).

Complete data loss

This means the complete loss of data on a server or across the network, caused by, for example, a server hardware failure, a cryptovirus, or a hacker attack. Recovery takes more time because there is more data to restore than in a partial recovery. How long it takes depends primarily on the backup technology. If only the data is backed up, selectively and without the system (for example, via Cobian Backup), restoration means installing the underlying OS, updates, drivers, and the information system itself, and only then restoring the data. That may take a few days, especially when you need to cooperate with the information system's supplier (who, in extreme cases, no longer exists or does not want to cooperate). Therefore, you need to choose backup software that backs up all disks and partitions (including the system) and can restore to different hardware (other disk sizes, a different disk controller, etc.). Again, it is best to test this in advance.
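Before running a real test, a back-of-the-envelope calculation can show whether a complete restore is even in the right ballpark. The following is a minimal sketch, not a measurement: the function name, the decimal units, and the example figures are my own assumptions, and the sustained throughput has to come from your own environment.

```python
def estimate_restore_hours(data_tb: float,
                           throughput_mb_s: float,
                           overhead_hours: float = 0.0) -> float:
    """Rough restore-time estimate: pure transfer time plus a fixed overhead.

    data_tb          amount of data to restore, in decimal terabytes
    throughput_mb_s  sustained restore throughput in MB/s (assumed, measure it!)
    overhead_hours   fixed extra time, e.g. reinstalling an OS or drivers
    """
    if data_tb < 0 or overhead_hours < 0:
        raise ValueError("data_tb and overhead_hours must not be negative")
    if throughput_mb_s <= 0:
        raise ValueError("throughput_mb_s must be positive")

    data_mb = data_tb * 1_000_000          # 1 TB = 1,000,000 MB (decimal units)
    transfer_hours = data_mb / throughput_mb_s / 3600
    return transfer_hours + overhead_hours


if __name__ == "__main__":
    # Illustrative numbers only: 3.6 TB restored at a sustained 100 MB/s.
    print(f"{estimate_restore_hours(3.6, 100):.1f} hours")
```

The estimate ignores everything a test recovery would reveal (increment chains, slow storage, network bottlenecks), so treat it as a lower bound rather than a promise.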

How old are our backups? (Recovery point objective) (property of customer's interest)

Some of our small customers do not mind losing 3 days of work; the data can simply be re-entered. For other customers, losing even 1 hour of work would be a problem, because no one can reconstruct the changes made in the system during that hour (it would be too expensive, or the changes could not be traced). So we needed a solution that would allow us to back up both once a day (the minimum we do not want to go below) and several times a day.

When planning the number of backups, you must think about the load that a backup (except for snapshots) puts on the server being backed up: the drives are busy the whole time, and at the beginning there may be short delays while the VSS copy is created.
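The relationship between an acceptable data loss and the backup schedule can be sketched in a few lines. This is only an illustration under my own assumptions: the function names are hypothetical, backups are assumed to be evenly spaced, and the once-a-day floor mirrors the minimum mentioned above.

```python
import math
from datetime import datetime, timedelta


def backups_per_day(rpo: timedelta) -> int:
    """Minimum number of evenly spaced backups per day that keeps the
    gap between two backups within the RPO (never fewer than one a day)."""
    if rpo <= timedelta(0):
        raise ValueError("rpo must be positive")
    return max(1, math.ceil(timedelta(days=1) / rpo))


def rpo_violated(last_backup: datetime, now: datetime, rpo: timedelta) -> bool:
    """True if the newest backup is older than the RPO allows."""
    return now - last_backup > rpo


if __name__ == "__main__":
    print(backups_per_day(timedelta(hours=1)))   # customer who cannot lose an hour
    print(backups_per_day(timedelta(days=3)))    # customer who can re-enter 3 days
```

A check like `rpo_violated` could run in monitoring, so that a backup job that silently stopped is noticed before the data is needed.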

Do the backups survive the failure of a single technology? (3-2-1 rule) (property of our interest)

What happens if there is a bug in the backup software and the data in the backup is not stored properly? If the server is broken, can we still reach the backups? And what if the server room is flooded by a broken pipe or destroyed by a fire?

3-2-1 backups rule

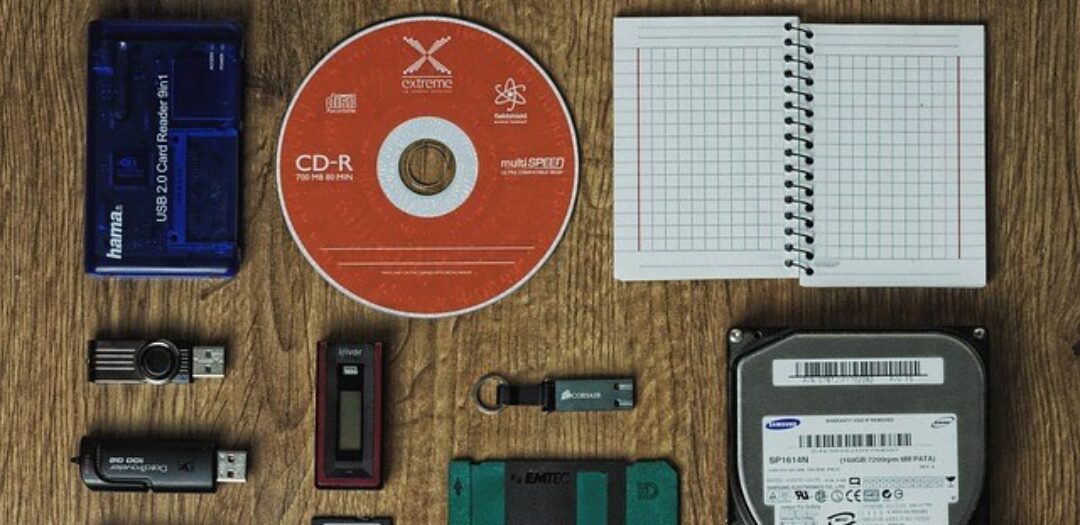

It is ideal to follow the 3-2-1 backup rule. That means having at least 3 copies of the data (including the production copy) on 2 different devices/technologies, with 1 copy offsite (in a different location than the other two). I would extend the rule to using more than one backup software product (one never knows when a backup tool will fail).
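The rule is simple enough to check mechanically against an inventory of where the data lives. Below is a minimal sketch; the `Copy` class, its fields, and the example inventory are hypothetical and only illustrate the three conditions of the rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str       # human-readable label, e.g. "production"
    medium: str     # device/technology, e.g. "server-disk", "nas", "cloud"
    offsite: bool   # True if stored in a different location


def check_3_2_1(copies: list[Copy]) -> list[str]:
    """Return a list of 3-2-1 rule violations (empty list = rule satisfied)."""
    problems = []
    if len(copies) < 3:
        problems.append(f"only {len(copies)} copies, need at least 3")
    if len({c.medium for c in copies}) < 2:
        problems.append("all copies are on the same device/technology")
    if not any(c.offsite for c in copies):
        problems.append("no offsite copy")
    return problems


if __name__ == "__main__":
    inventory = [
        Copy("production", "server-disk", offsite=False),
        Copy("local backup", "nas", offsite=False),
        Copy("cloud backup", "cloud", offsite=True),
    ]
    print(check_3_2_1(inventory) or "3-2-1 rule satisfied")
```

The check says nothing about whether each copy is actually restorable; it only verifies how the copies are distributed.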

Are backups virus and hacker-resistant? (property of our interest)

What if someone (a virus or a hacker) breaks into our network? Will they reach the backups and be able to delete them? A cryptovirus does not ask; it encrypts everything it can reach, including backups (an external USB disk is not a solution – see Ransomware 2: to Pay the Ransom for Encrypted Data?). It is important that backups are as separate as possible from the production environment. Ideally, backups cannot be deleted or changed at all.

Backups are so important that one needs to be almost paranoid (see The darkest threat I am afraid of in IT security) and expect the unexpected. Losing production data together with the backups can mean the end for many businesses, and the damage can run into the hundreds of millions! I believe no one wants to go to sleep with that on their mind. Therefore, it is necessary to include some offline or cloud backups in the backup process.

It is also ideal to make an archive backup (for example, once every six months) and deposit it somewhere safe, because a patient attacker could deliberately render your backups worthless. For example, the data could be transparently encrypted in the production environment (which you would not notice), so you would be backing up encrypted data. Once every backup you keep contains only the encrypted data, the attacker would delete the “encryption key“.

Other things to keep in mind

Backups differ in their consistency: inconsistent, crash-consistent, and application-consistent. In simple terms, this describes whether the backed-up data is undamaged and complete (for example, whether changes still held in memory had been written to disk). It is beautifully described in the article “VSS Crash-Consistent vs. Application-Consistent VSS Backups“. We always aim for “application-consistent” backups (the same state as if we had properly shut down the applications before backing up the data). You can live with “crash-consistent” backups (the same state as if the server had been abruptly powered off and the data backed up afterwards). With inconsistent backups, a successful recovery is mostly a matter of luck.

Another important point is to periodically test data recovery and the backup systems, and to have a recovery plan. At the moment of data loss, you need to be sure that you can rely on the backups and that you know the recovery procedure. By then it is too late, and too stressful, to start figuring out the order in which the servers will be recovered or where the recovery media and drivers are.

The issue I do not address in this article is the “confidentiality” of the backups. So far, we unfortunately have no standardized solution (we deal with it on a case-by-case basis). If you want to share how you solve it, I’d love the chance to learn.
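One simple way to make a trial recovery verifiable is to compare checksums of the restored files against a reference copy. The sketch below is an assumption on my part, not a description of any particular backup product: the function names are hypothetical, and the reference directory must be in the same state as at backup time (for example, a snapshot), since live production data changes after the backup is taken.

```python
import hashlib
from pathlib import Path


def hash_tree(root: Path) -> dict[str, str]:
    """Map each file's path (relative to root) to its SHA-256 digest."""
    digests = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            h = hashlib.sha256()
            with path.open("rb") as f:
                # Read in 1 MiB chunks so large files do not fill memory.
                for chunk in iter(lambda: f.read(1024 * 1024), b""):
                    h.update(chunk)
            digests[path.relative_to(root).as_posix()] = h.hexdigest()
    return digests


def compare_restore(reference: Path, restored: Path) -> dict[str, list[str]]:
    """Report files that are missing, unexpected, or changed after a restore."""
    ref, res = hash_tree(reference), hash_tree(restored)
    return {
        "missing": sorted(set(ref) - set(res)),
        "unexpected": sorted(set(res) - set(ref)),
        "changed": sorted(p for p in ref.keys() & res.keys() if ref[p] != res[p]),
    }
```

An empty result in all three lists means the restored tree matches the reference byte for byte; it does not prove that an application will start from the restored data, so a full trial recovery is still needed.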

Conclusion

What do you think about it? Did you find any mistake/inaccuracy? Are you solving similar questions about backups, or are you bothered by anything with backups specifically? How often do you perform a trial recovery? Please leave a comment below the article or send me an email.

Backups in real life

I have prepared a follow-up article for you on how we handle backups at PATRON-IT. It covers which specific technologies we trust, how we set them up, and where we see the benefits and pitfalls. I have already published the article here: “Data Backup – How to Not to Lose Data When Hacked (In Real Life)“.
